The Cayley-Hamilton Theorem states that if $A$ is an $n \times n$ matrix over the field $F$ with characteristic polynomial $p$, then $p(A) = 0$.

We note that $p(\lambda) = \det(\lambda I - A)$. Hence, why can't we just substitute $\lambda$ with $A$ and directly prove Cayley-Hamilton Theorem by saying that $p(A) = p(\lambda) = \det(\lambda I - A) = \det(AI - A) = 0$?

$\endgroup$ 75 Answers

$\begingroup$There is another way to see that the proof must be flawed: by examining the consequences this proof technique would have. If the proof were valid, then we would also have the following generalisation:

Faulty Lemma. Suppose that $A$ and $B$ are $n\times n$ matrices. Let $p_A$ be the characteristic polynomial for $A$. If $B - A$ is singular, then $B$ must be a zero of $p_A$.

Faulty proof: We have $p_A(B) = \det(BI - A) = \det(B - A) = 0$.$$\tag*{$\Box$}$$

This has the following amazing consequence:

Faulty Corollary. Every singular matrix is nilpotent.

Faulty proof: Let $B$ be a singular matrix and let $A$ be the zero matrix. Now we have $p_A(\lambda) = \lambda^n$. Furthermore, by the above we have $p_A(B) = 0$, because $B - A$ is singular. Thus, we have $B^n = 0$ and we see that $B$ is nilpotent.$$\tag*{$\Box$}$$

In particular, this would prove that $$ \pmatrix{1 & 0 \\ 0 & 0}^2 = \pmatrix{0 & 0 \\ 0 & 0}. $$ This goes to show just how wrong the proof is!
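The counterexample can be checked by direct computation. The following is a minimal sketch in plain Python; the helper names `mat_mul` and `det2` are made up for this illustration and are not part of the argument above.

```python
def mat_mul(X, Y):
    """Multiply two square matrices given as lists of rows."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def det2(X):
    """Determinant of a 2x2 matrix."""
    return X[0][0] * X[1][1] - X[0][1] * X[1][0]

B = [[1, 0], [0, 0]]        # the singular matrix from the faulty corollary
B_squared = mat_mul(B, B)   # what the corollary claims is the zero matrix

print(det2(B))      # B - A with A = 0 is B itself, and it is singular
print(B_squared)    # B is idempotent, so no power of it is zero
```

So $B - A$ is singular, yet $B^2 = B \neq 0$, which contradicts the faulty lemma.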

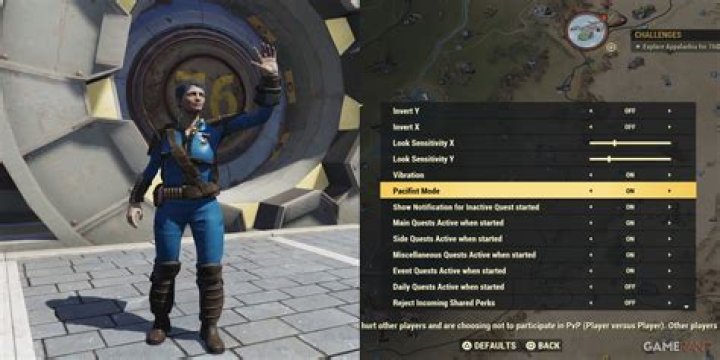

$\endgroup$ 3 $\begingroup$If $$A = \begin{bmatrix} 1 & 2 \\ 3 & 4 \end{bmatrix}$$ then $p(\lambda)$ is the determinant of the matrix $$\lambda I - A = \begin{bmatrix} \lambda - 1 & -2 \\ -3 & \lambda - 4 \end{bmatrix}.$$ Now I plug in $A$ for $\lambda$ and get $$\begin{bmatrix} \begin{bmatrix} 1 & 2 \\ 3 & 4 \end{bmatrix} - 1 & -2 \\ -3 & \begin{bmatrix} 1 & 2 \\ 3 & 4 \end{bmatrix} - 4 \end{bmatrix}$$ but I don't know what that is, and I certainly don't know how to take its determinant.

So the reason you can't plug into $\det(\lambda I - A)$ is because that expression only makes sense when $\lambda$ is a scalar. The definition of $p(\lambda)$ isn't really $\det(\lambda I - A)$, the definition of $p(\lambda)$ is that it's the polynomial whose value on any scalar equals the value of $\det(\lambda I - A)$.

On the other hand, I could define a function $P(\lambda) = \det(\lambda - A)$ where I'm now allowed to plug in matrices of the same size as $A$, and I certainly would get zero if I plugged in $A$. But this is a function from matrices to numbers, whereas when I plug matrices into $p(\lambda)$ I get matrices as output. So it doesn't make sense to say that these are equal, and the fact that $P(A) = 0$ wouldn't seem to imply that $p(A) = 0$, since $P$ and $p$ aren't the same thing.
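To make the type mismatch concrete, here is a small sketch in plain Python for the matrix $A$ above. It assumes the standard $2\times 2$ formula $p(\lambda) = \lambda^2 - \operatorname{tr}(A)\lambda + \det(A)$; the names `p_of_A` and `P_of_A` are illustrative only.

```python
def mat_mul(X, Y):
    """Multiply two square matrices given as lists of rows."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def det2(X):
    """Determinant of a 2x2 matrix."""
    return X[0][0] * X[1][1] - X[0][1] * X[1][0]

A = [[1, 2], [3, 4]]
I = [[1, 0], [0, 1]]
trace = A[0][0] + A[1][1]
det_A = det2(A)

# p(A): substitute A into the polynomial, with the constant term times I.
A_squared = mat_mul(A, A)
p_of_A = [[A_squared[i][j] - trace * A[i][j] + det_A * I[i][j]
           for j in range(2)] for i in range(2)]

# P(A): take the determinant of the matrix A - A.
difference = [[A[i][j] - A[i][j] for j in range(2)] for i in range(2)]
P_of_A = det2(difference)

print(p_of_A)   # a 2x2 matrix
print(P_of_A)   # a single number
```

Both results are "zero", but one is the zero matrix and the other is the number $0$, and only the first is what Cayley-Hamilton asserts.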

$\endgroup$ 3 $\begingroup$Remember that there is a difference between $p(x)$, where $x$ is a scalar, and $p(A)$, where $A$ is a matrix. The next thing you should notice is that if your deduction were true, then in the equation $p(A)=0$ the left-hand side would be a matrix, while the right-hand side would be the scalar $0$.

What the Cayley-Hamilton theorem says is that $A$ satisfies its own characteristic polynomial. If you have worked with minimal polynomials before, the proof of this statement is a simple task (given all of the previous work, obviously).

$\endgroup$ 1 $\begingroup$The original question was: Can I write $\det(I A - A) = 0$ in a meaningful way? Yes, if the first $A$ is considered as a scalar in the ring $F[A]\subset M_n(F)$, and the second one as the matrix representing $A$. And the reason this works is, indeed, that $A$ is an eigenvalue of the matrix $A$. How is this so? Details are below. Note that to make it work we need to work with modules, since the coefficients will lie in a ring containing $F$.

We'll start with some easy statements:

Let $k$ be a field, and $(a_{ij})$ an $n\times n$ matrix with elements in $k$ and $\lambda \in k$ so that there exists an element $v \in k^n$, $v$ nonzero, with $(a_{ij}) \cdot v = \lambda \cdot v$. Then $\det( (a_{ij}) - \lambda I ) = 0$. Indeed, the matrix $(a_{ij}) - \lambda I$ is not invertible.

Same conclusion, if we substitute $k$ with a $k$-vector space $V$, and there exists a nonzero element $v$ in $V^{ n}$ with $(a_{ij})\cdot v = \lambda \cdot v$.

More generally: $k$ a commutative ring, $V$ a $k$-module, $(a_{ij})$ a matrix in $M_n(k)$ and $\lambda \in k$ and $v$ in $V^n$ so that $(a_{ij})\cdot v = \lambda \cdot v$. Then $\det((a_{ij}) - \lambda I) \cdot v = 0 \in V^n$. Use the adjoint matrix.

Now let $A$ be an $n\times n$ matrix over $F$. Let $k := F[A]\subset M_n(F)$ be the commutative algebra generated by $A$. Then $V := F^n$ is a $k$-module. Let $e_1, \ldots, e_n$ be the standard basis of $F^n$. We have

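$$ (a_{ji}) \cdot \left( \begin{array}{c} e_1 \\ \ldots \\ e_n\end{array} \right ) = \left( \begin{array}{c} A\cdot e_1 \\ \ldots \\ A \cdot e_n\end{array} \right ) $$

Here $(a_{ji})$ is the transpose of $A = (a_{ij})$; it appears because $A\cdot e_i = \sum_j a_{ji} e_j$ is the $i$-th column of $A$. The above equation says that $\left( \begin{array}{c} e_1 \\ \ldots \\ e_n\end{array} \right )$ in $V^n$, with $V = F^n$, is an eigenvector of this matrix for the eigenvalue $A \in k$. Since a matrix and its transpose have the same characteristic polynomial, it follows that $$P_A(A) \cdot \left( \begin{array}{c} e_1 \\ \ldots \\ e_n\end{array} \right )= \left( \begin{array}{c} 0 \\ \ldots \\ 0\end{array} \right )$$

that is, $P_A(A)\cdot e_i = 0$ for every basis vector $e_i$, and therefore $P_A(A)=0$.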

$\bf{Added}$: Making sense of $\det(AI - A)$:

Consider the example of @Jim: $$A= \left[\begin{array}{cc}1&2\\3&4\end{array} \right]$$

$$\begin{bmatrix} \begin{bmatrix} 1 & 2 \\ 3 & 4 \end{bmatrix} - 1 & -2 \\ -3 & \begin{bmatrix} 1 & 2 \\ 3 & 4 \end{bmatrix} - 4 \end{bmatrix}\ \ \ ?$$

We need to look at scalars as scalar matrices. So we get

$$\begin{bmatrix} \begin{bmatrix} 1 & 2 \\ 3 & 4 \end{bmatrix} - \begin{bmatrix} 1 & 0 \\ 0 & 1\end{bmatrix} & \begin{bmatrix} -2 & 0 \\ 0 & -2\end{bmatrix} \\ \begin{bmatrix} -3 & 0 \\ 0 &-3 \end{bmatrix} & \begin{bmatrix} 1 & 2 \\ 3 & 4 \end{bmatrix} - \begin{bmatrix} 4 & 0 \\ 0 & 4 \end{bmatrix} \end{bmatrix}$$

This $2\times 2$ matrix, with entries in the commutative algebra $F[A]$, has determinant $P_A(A)$, a matrix of the same size as $A$. And it will always be the zero matrix.
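For this particular $A$ the determinant over $F[A]$ can be computed by hand or by machine. The sketch below, in plain Python, treats each entry of the outer $2\times 2$ matrix as a $2\times 2$ block and uses the usual formula $ad - bc$, which is legitimate here because all four blocks lie in $F[A]$ and therefore commute. The helper names are assumptions made for this illustration.

```python
def mat_mul(X, Y):
    """Multiply two square matrices given as lists of rows."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def mat_sub(X, Y):
    """Entrywise difference of two matrices of the same size."""
    return [[x - y for x, y in zip(row_x, row_y)] for row_x, row_y in zip(X, Y)]

def scalar(c):
    """The scalar c viewed as the 2x2 scalar matrix c*I."""
    return [[c, 0], [0, c]]

A = [[1, 2], [3, 4]]

# Entries of the outer 2x2 matrix, each one an element of F[A].
top_left = mat_sub(A, scalar(1))
top_right = scalar(-2)
bottom_left = scalar(-3)
bottom_right = mat_sub(A, scalar(4))

# Determinant over the commutative ring F[A]: ad - bc.
block_det = mat_sub(mat_mul(top_left, bottom_right),
                    mat_mul(top_right, bottom_left))
print(block_det)
```

Expanding by hand gives $(A - I)(A - 4I) - 6I = A^2 - 5A - 2I$, which is exactly the characteristic polynomial of $A$ evaluated at $A$.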

$\endgroup$ 5 $\begingroup$Actually, you can do what you want, but you have to be very careful about what you mean when you evaluate a polynomial with matrix coefficients at a matrix. For example, if you have a matrix polynomial with square matrix coefficients $A_j$ such as $$ p(\lambda) = A_{0}+\lambda A_{1}+\lambda^{2}A_{2}+\cdots+\lambda^{n}A_{n}, $$ then what do you mean by $p(M)$ where $M$ is a matrix? Do you mean $$ p(M) = A_{0}+MA_{1}+M^{2}A_{2}+\cdots+M^{n}A_{n}, $$ or do you mean $$ p(M)=A_{0}+A_{1}M+A_{2}M^{2}+A_{3}M^{3}+\cdots+A_{n}M^{n}, $$ or do you mean the average of these two? Or do you mean something even stranger where some powers of $M$ are to the left of a coefficient and some are to the right?

And why is this important? Because if you factor a matrix polynomial into two others, say, $$ p(\lambda)=q(\lambda)r(\lambda), $$ then it can happen that $p(M)\ne q(M)r(M)$. Whereas $\lambda$ commutes with the matrix coefficients, $M$ and its powers do not necessarily commute with them. To see what I mean, suppose $$ P_{0}+\lambda P_{1}+\cdots+\lambda^{l}P_{l} \\ =(Q_{0}+\lambda Q_{1}+\cdots+\lambda^{m}Q_{m})(R_{0}+\lambda R_{1}+\cdots+\lambda^{n}R_{n}). $$ This has a very specific meaning: $$ P_{0}=Q_{0}R_{0},\\ P_{1}=Q_{0}R_{1}+Q_{1}R_{0},\\ P_{2}=Q_{0}R_{2}+Q_{1}R_{1}+Q_{2}R_{0},\\ \cdots $$ But when you substitute a matrix $M$ for $\lambda$, things don't work out any more. First, you have to choose a left-, a right-, or a mixed-evaluation, and you can see that the above relations are not necessarily preserved during evaluation of the polynomial at a matrix; in general, $$ P_{0}+P_{1}M+P_{2}M^{2}+\cdots+P_{l}M^{l} \\ \ne (Q_{0}+Q_{1}M+Q_{2}M^{2}+\cdots+Q_{m}M^{m})(R_{0}+R_{1}M+R_{2}M^{2}+\cdots+R_{n}M^{n}). $$ For example, $P_{1}=Q_{0}R_{1}+Q_{1}R_{0}$ is not enough to guarantee $$ P_{1}M=Q_{0}R_{1}M+Q_{1}MR_{0}. $$ So, factorings of matrix polynomials are not generally preserved under evaluation of polynomials at a matrix $M$, regardless of what convention you choose for evaluating those polynomials.
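A tiny concrete instance may help. The sketch below, in plain Python, takes $q(\lambda) = \lambda I$ and a constant $r(\lambda) = R_0$, so that $p(\lambda) = \lambda R_0$, and compares the right evaluation of $p$ at $M$ with the product of the right evaluations of $q$ and $r$. The matrices `M` and `R0` and all helper names are chosen purely for illustration.

```python
def mat_mul(X, Y):
    """Multiply two square matrices given as lists of rows."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def mat_add(X, Y):
    """Entrywise sum of two matrices of the same size."""
    return [[x + y for x, y in zip(row_x, row_y)] for row_x, row_y in zip(X, Y)]

def zeros(n):
    return [[0] * n for _ in range(n)]

def identity(n):
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]

def right_eval(coeffs, M):
    """Right evaluation: coeffs[0] + coeffs[1] M + coeffs[2] M^2 + ..."""
    total, power = zeros(len(M)), identity(len(M))
    for C in coeffs:
        total = mat_add(total, mat_mul(C, power))
        power = mat_mul(power, M)
    return total

def poly_mul(Q, R):
    """Coefficients of q(lambda) r(lambda), with lambda treated as a scalar."""
    n = len(Q[0])
    P = [zeros(n) for _ in range(len(Q) + len(R) - 1)]
    for i, Qi in enumerate(Q):
        for j, Rj in enumerate(R):
            P[i + j] = mat_add(P[i + j], mat_mul(Qi, Rj))
    return P

M = [[0, 1], [0, 0]]
R0 = [[1, 0], [0, 0]]          # does not commute with M

Q = [zeros(2), identity(2)]    # q(lambda) = lambda * I
R = [R0]                       # r(lambda) = R0
P = poly_mul(Q, R)             # p(lambda) = lambda * R0

p_at_M = right_eval(P, M)                                  # R0 M
q_times_r_at_M = mat_mul(right_eval(Q, M), right_eval(R, M))  # M R0

print(p_at_M)
print(q_times_r_at_M)
```

Here the right evaluation of $p$ is $R_0 M$ while $q(M)r(M) = M R_0$, and these differ because $M$ and $R_0$ do not commute.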

Having tried to discourage you, I should note that everything works in the case that you are considering, thanks to an important special case: If you use evaluation on the right for $p$, $q$ and $r$, and if $Mr(M)=r(M)M$, then you can move all of the powers of $M$ to the far right in $q(M)r(M)$ to obtain the same thing that you would get if you were to evaluate $p(M)$ on the right. (A similar thing holds for left evaluation.) And this condition is automatically satisfied if the right evaluation $r(M)$ is $0$, because $0$ commutes with everything. Hence, the right evaluation of $p$ at $M$ is $0$ if $p=qr$ and if the right evaluation of $r$ at $M$ is $0$.

Proof of Cayley-Hamilton Theorem: So how does this apply in the Cayley-Hamilton Theorem? Suppose $A$ is an $n\times n$ matrix, and let $A_{i,j}$ be the determinant of the matrix obtained by removing the $i$-th row and $j$-th column from $A$. The adjugate (or adjunct) matrix $A_{\mbox{adj}}=[(-1)^{i+j}A_{j,i}]$ (note the intentional swap of $i$ and $j$) satisfies $$ AA_{\mbox{adj}}=A_{\mbox{adj}}A = \mbox{det}(A)I, $$ where $\mbox{det}(A)$ is the scalar determinant of $A$ and $I$ is the identity matrix. In particular, $$ (A-\lambda I)(A-\lambda I)_{\mbox{adj}}=(A-\lambda I)_{\mbox{adj}}(A-\lambda I)= \mbox{det}(A-\lambda I)I. $$ The matrix $(A-\lambda I)_{\mbox{adj}}$ consists of cofactors of $A-\lambda I$ and, as such, each entry in this matrix is a polynomial in $\lambda$ of degree at most $n-1$. By collecting like powers, $$ (A-\lambda I)_{\mbox{adj}}=Q_{0}+\lambda Q_{1}+\cdots+\lambda^{n-1}Q_{n-1}, $$ where the $Q_{j}$ are $n\times n$ coefficient matrices. Therefore, you have $$ (Q_{0}+Q_{1}\lambda + \cdots + Q_{n-1}\lambda^{n-1})(A-\lambda I)=(-1)^{n}p(\lambda)I, $$ where $p(\lambda)=\mbox{det}(\lambda I -A)$ is the characteristic polynomial of $A$. This factoring is preserved by right evaluation at $A$ because the right evaluation of $A-\lambda I$ at $\lambda =A$ gives you the $0$ matrix, which commutes with every matrix including $A$. Therefore, the right- and left-evaluations of $p(\lambda)I$ at $\lambda=A$ must give you $0$ (these evaluations are trivially the same as the usual evaluation of a scalar polynomial at a matrix, since the coefficients may be thought of as scalar multiples of the identity matrix). The conclusion is that $p(A)=0$, which is the Cayley-Hamilton Theorem.
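As a sanity check of this argument, here is a sketch in plain Python for the $2\times 2$ matrix $A = \begin{bmatrix} 1 & 2 \\ 3 & 4 \end{bmatrix}$ used elsewhere in this thread. The coefficients $Q_0$ and $Q_1$ were read off by hand from the cofactors of $A - \lambda I$; the helper names are assumptions made for this illustration, not anything canonical.

```python
def mat_mul(X, Y):
    """Multiply two square matrices given as lists of rows."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def mat_add(X, Y):
    """Entrywise sum of two matrices of the same size."""
    return [[x + y for x, y in zip(row_x, row_y)] for row_x, row_y in zip(X, Y)]

def zeros(n):
    return [[0] * n for _ in range(n)]

def identity(n):
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]

def right_eval(coeffs, M):
    """Right evaluation: coeffs[0] + coeffs[1] M + coeffs[2] M^2 + ..."""
    total, power = zeros(len(M)), identity(len(M))
    for C in coeffs:
        total = mat_add(total, mat_mul(C, power))
        power = mat_mul(power, M)
    return total

def poly_mul(Q, R):
    """Coefficients of q(lambda) r(lambda), with lambda treated as a scalar."""
    n = len(Q[0])
    P = [zeros(n) for _ in range(len(Q) + len(R) - 1)]
    for i, Qi in enumerate(Q):
        for j, Rj in enumerate(R):
            P[i + j] = mat_add(P[i + j], mat_mul(Qi, Rj))
    return P

A = [[1, 2], [3, 4]]
minus_I = [[-1, 0], [0, -1]]

# adj(A - lambda I) = [[4 - lambda, -2], [-3, 1 - lambda]] = Q0 + lambda Q1
Q = [[[4, -2], [-3, 1]], minus_I]
R = [A, minus_I]               # r(lambda) = A - lambda I

P = poly_mul(Q, R)             # coefficients of (-1)^n p(lambda) I, here n = 2
r_at_A = right_eval(R, A)      # A - I A
p_at_A = right_eval(P, A)      # right evaluation of the product at A

print(P)
print(r_at_A)
print(p_at_A)
```

The coefficients of the product come out as $-2I$, $-5I$ and $I$, matching $p(\lambda) = \lambda^2 - 5\lambda - 2$, and both the right evaluation of $A - \lambda I$ and that of the product at $A$ are the zero matrix.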

Note: the real crux of the matter is that, up to the sign $(-1)^{n}$, $p(\lambda)I=Q(\lambda)(A-\lambda I)$, so that $A$ is an obvious zero of the polynomial on the right and, hence, also of the characteristic polynomial. It is an odd factoring, in that all matrix coefficients of $p(\lambda)I$ are scalar multiples of the identity. So the hard part is found in the explicit adjugate (adjunct) formula for the inverse of a matrix, which is one of several reasons why determinants remain important.

$\endgroup$