I am having trouble running Spark in a Jupyter notebook. I have the following variables set in ~/.bashrc:

export SPARK_HOME=~/Development/Spark/spark-2.4.4-bin-hadoop2.7

export PATH=$SPARK_HOME/bin:$PATH

export PATH=~/anaconda3/bin:$PATH

export PATH=$PATH:~/.local/bin/

export PYTHONPATH=$SPARK_HOME/python:$PYTHONPATH

export PYSPARK_DRIVER_PYTON=ipython

export PYSPARK_DRIVER_PYTHON_OPTS='notebook' pyspark

export PYSPARK_PYTHON=python3

When I type pyspark, I get the error

python3: can't open file 'notebook': errno 2 no such file or directory

Running 'jupyter notebook' on its own does open a notebook in the browser for me.

How can I fix this?

1 Answer

TL;DR Check that the environment variables PYSPARK_DRIVER_PYTHON and PYSPARK_PYTHON are not set in spark-env.sh.

I ran into a similar issue after setting up Spark by following a book, before taking a PySpark course on Udemy.

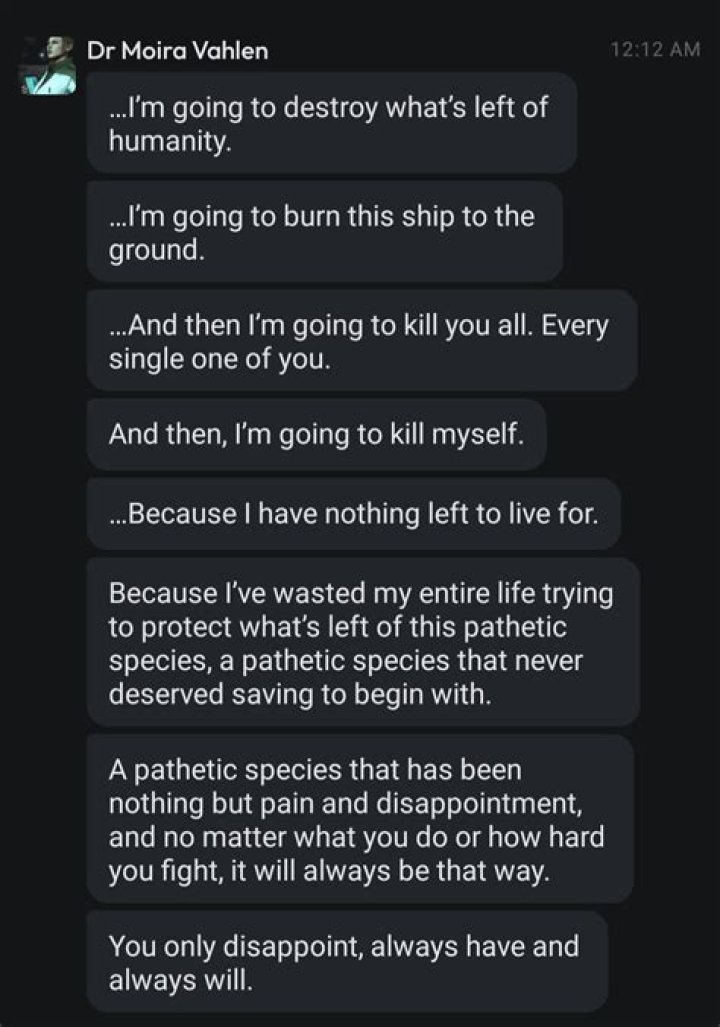

After scouring StackOverflow and troubleshooting, it turned out that I had defined the environment variables PYSPARK_DRIVER_PYTHON and PYSPARK_PYTHON in both my ~/.bashrc and spark-env.sh, as shown below.

~/.bashrc

export PYSPARK_PYTHON=~/anaconda3/bin/python

export PYSPARK_DRIVER_PYTHON=jupyter

export PYSPARK_DRIVER_PYTHON_OPTS=notebook

spark-env.sh

PYSPARK_PYTHON=python3

PYSPARK_DRIVER_PYTHON=python3

The solution for me was to remove those lines from spark-env.sh. I was then able to start Jupyter Notebook by running the pyspark command and use PySpark within the notebook. It is expected that the jupyter notebook command on its own opens Jupyter notebooks in a browser.
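A quick way to look for this kind of duplicate definition is to list every PYSPARK_* assignment in both files side by side. The sketch below is only an illustration: find_pyspark_vars is a hypothetical helper name, and it assumes spark-env.sh sits in $SPARK_HOME/conf, which is the default layout but may differ on your machine. As far as I understand, the pyspark launcher reads spark-env.sh at startup, so a value assigned there can replace the one exported from ~/.bashrc.

```shell
# Hypothetical helper: print every line that assigns a PYSPARK_* variable
# in the given files, prefixed with the file name and line number.
find_pyspark_vars() {
    for f in "$@"; do
        # Skip files that do not exist (spark-env.sh is optional).
        [ -f "$f" ] || continue
        # -H prints the file name, -n the line number, -E enables the
        # extended regex. "|| true" keeps a file without matches from
        # being treated as a failure.
        grep -HnE '^[[:space:]]*(export[[:space:]]+)?PYSPARK_[A-Z_]+=' "$f" || true
    done
}

# Assumption: default Spark layout with spark-env.sh under $SPARK_HOME/conf.
find_pyspark_vars "$HOME/.bashrc" "${SPARK_HOME:-}/conf/spark-env.sh"
```

If the same variable shows up in both files, or a name is spelled differently from what you expect, that is the line to look at first.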

Hope this helps!